When an outage affects a component of the internet infrastructure, there are often downstream ripple effects on other components or services, either directly or indirectly. We would like to share our observations of this impact during two recent outages, measured at various levels of the DNS hierarchy, and discuss the resulting increase in query volume caused by the behavior of recursive resolvers.

In early October 2021, the internet saw two significant outages affecting Facebook’s services and the .club top-level domain, neither of which resolved properly for a period of time. Throughout these outages, Verisign and other DNS operators reported significant increases in query volume. We provided consistent responses throughout, with the correct delegation data pointing to the correct name servers.

While these higher query rates do not impact Verisign’s ability to respond, they raise a broader operational question – whether the repeated nature of these queries, indicative of a lack of negative caching, might be mistaken for a denial-of-service attack.

On Oct. 4, 2021, Facebook experienced a widespread outage, lasting nearly six hours. During this time most of its systems were unreachable, including those that provide Facebook’s DNS service. The outage impacted facebook.com, instagram.com, whatsapp.net and other domain names.

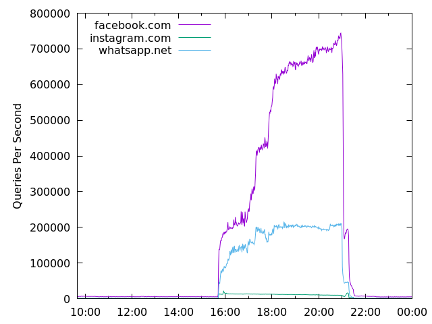

Under normal conditions, the .com and .net authoritative name servers answer about 7,000 queries per second in total for the three domain names previously mentioned. During this particular outage, however, query rates for these domain names reached upwards of 900,000 queries per second (an increase of more than 100x), as shown in Figure 1 below.

Figure 1: Rate of DNS queries for Facebook’s domain names during the 10/4/21 outage.

During this outage, recursive name servers received no response from Facebook’s name servers – instead, those queries timed out. In situations such as this, recursive name servers generally return a SERVFAIL or “server failure” response, presented to end users as a “this site can’t be reached” error.

Figure 1 shows the query rate increasing over the duration of the outage. Facebook uses relatively low Time-to-Live (TTL) values of one to five minutes on its DNS records; the TTL is a setting that tells DNS resolvers how long to cache an answer before issuing a new query. This in turn means that, five minutes into the outage, all relevant records would have expired from all recursive resolver caches – or at least from those that honor the publisher’s TTLs. It is not immediately clear why the query rate continued to climb throughout the outage, nor whether it would eventually have plateaued had the outage continued.
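To make the TTL reasoning concrete, here is a minimal Python sketch of cache expiry. The `CacheEntry` class, the `latest_expiry` helper, and the example names and TTL values are illustrative assumptions, not a description of any particular resolver. It considers the worst case, in which every record was refreshed at the moment the outage began.

```python
from dataclasses import dataclass


@dataclass
class CacheEntry:
    name: str           # hypothetical record name
    ttl_seconds: int    # TTL published by the authoritative server
    cached_at: float    # time the answer was stored, in seconds

    def is_fresh(self, now: float) -> bool:
        """An entry may be served from cache until its TTL has elapsed."""
        return now - self.cached_at < self.ttl_seconds


def latest_expiry(entries):
    """Return the time at which the last cached entry expires."""
    return max(e.cached_at + e.ttl_seconds for e in entries)


# Worst case: both records were cached at t=0, just as the outage began.
# The TTLs span the one-to-five-minute range (assumed per-record values).
cache = [
    CacheEntry("www.example.com", ttl_seconds=60, cached_at=0.0),
    CacheEntry("ns.example.com", ttl_seconds=300, cached_at=0.0),
]
print(latest_expiry(cache))                    # 300.0
print(any(e.is_fresh(300.0) for e in cache))   # False
```

Under these assumptions, a resolver that honors TTLs has nothing left to serve from cache 300 seconds into the outage, so every subsequent client request triggers a new round of upstream queries.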

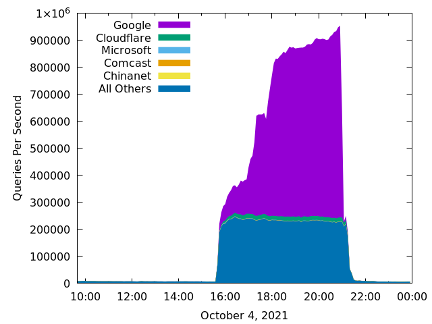

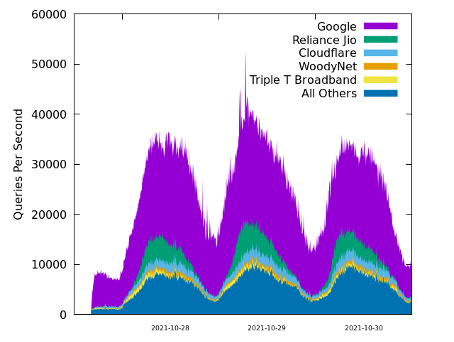

To get a sense of where the traffic comes from, we group query sources by their autonomous system number. The top five autonomous systems, along with all others grouped together, are shown in Figure 2.

Figure 2: Rate of DNS queries for Facebook’s domain names grouped by source autonomous system.

From Figure 2 we can see that, at their peak, queries for these domain names to Verisign’s .com and .net authoritative name servers from the most active recursive resolvers – those of Google and Cloudflare – increased around 7,000x and 2,000x respectively over their average non-outage rates.
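The grouping by autonomous system used for this kind of analysis can be sketched roughly as follows. This is only an illustration: the `group_by_asn` function and the `ip_to_asn` lookup table are hypothetical, the addresses and AS numbers come from ranges reserved for documentation, and a real analysis would derive the mapping from routing data rather than a hand-written dictionary.

```python
from collections import Counter


def group_by_asn(source_ips, ip_to_asn, top_n=5):
    """Count queries per source ASN, keeping the top_n and lumping the rest."""
    counts = Counter(ip_to_asn.get(ip, "unknown") for ip in source_ips)
    grouped = dict(counts.most_common(top_n))
    others = sum(counts.values()) - sum(grouped.values())
    if others:
        grouped["All Others"] = others
    return grouped


# Hypothetical query sources (one entry per query) and IP-to-ASN table.
queries = ["192.0.2.1"] * 3 + ["198.51.100.7"] * 2 + ["203.0.113.9"]
ip_to_asn = {"192.0.2.1": 64496, "198.51.100.7": 64497, "203.0.113.9": 64498}

print(group_by_asn(queries, ip_to_asn, top_n=2))
# {64496: 3, 64497: 2, 'All Others': 1}
```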

.club

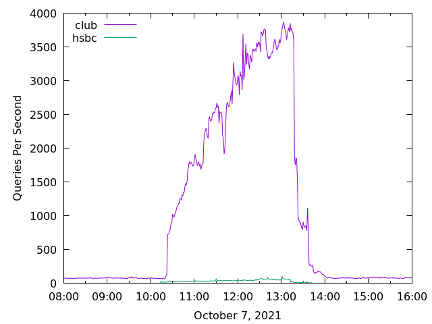

On Oct. 7, 2021, three days after Facebook’s outage, the .club and .hsbc TLDs also experienced a three-hour outage. In this case, the relevant authoritative servers remained reachable, but responded with SERVFAIL messages. The effect on recursive resolvers was essentially the same: Since they did not receive useful data, they repeatedly retried their queries to the parent zone. During the incident, the Verisign-operated A-root and J-root servers observed an increase in queries for .club domain names of 45x, from 80 queries per second before the outage to 3,700 queries per second during it.

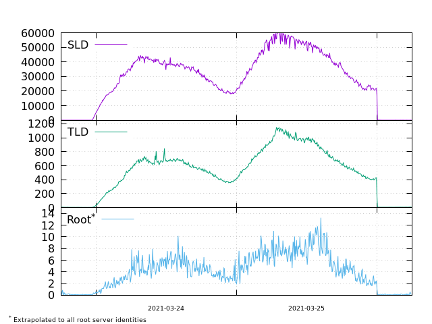

Figure 3: Rate of DNS queries to A and J root servers during the 10/7/2021 .club outage.

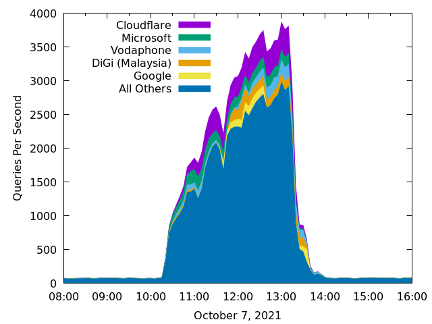

As in the previous example, the query rate trended upward for the duration of this outage. In this case, it might be explained by the fact that the records for .club’s delegation in the root zone use two-day TTLs. However, the theoretical analysis is complicated by the fact that authoritative name server records in child zones use longer TTLs (six days), while authoritative name server address records use shorter TTLs (10 minutes). Here we do not observe a significant amount of query traffic from Google sources; instead, the increased query volume is largely attributable to the long tail of recursive resolvers in “All Others.”

Figure 4: Rate of DNS queries for .club and .hsbc, grouped by source autonomous system.

Botnet Traffic

Earlier this year Verisign implemented a botnet sinkhole and analyzed the received traffic. This botnet utilizes more than 1,500 second-level domain names, likely for command and control. We observed queries from approximately 50,000 clients every day. As an experiment, we configured our sinkhole name servers to return SERVFAIL and REFUSED responses for two of the botnet domain names.

When configured to return a valid answer, each domain name’s query rate peaks at about 50 queries per second. However, when configured to return SERVFAIL, the query rate for a single domain name increases to 60,000 per second, as shown in Figure 5. Further, the query rate for the botnet domain name also increases at the TLD and root name servers, even though those services are functioning normally and data relevant to the botnet domain name has not changed – just as with the two outages described above. Figure 6 shows data from the same experiment (although for a different date), colored by the source autonomous system. Here, we can see that approximately half of the increased query traffic is generated by one organization’s recursive resolvers.

Figure 5: Query rates to name servers for one domain name experimentally configured to return SERVFAIL.

Figure 6: Query rates to botnet sinkhole name servers, grouped by autonomous system, when one domain name is experimentally configured to return SERVFAIL.

Common Threads

These two outages and one experiment all demonstrate that recursive name servers can become unnecessarily aggressive when responses to queries are not received due to connectivity issues, timeouts, or misconfigurations.

In each of these three cases, we observe significant query rate increases from recursive resolvers across the internet – with particular contributors, such as Google Public DNS and Cloudflare’s resolver, identified on each occasion.

Often in cases like this we turn to internet standards for guidance. RFC 2308 is a 1998 Standards Track specification that describes negative caching of DNS queries. The RFC covers name errors (e.g., NXDOMAIN), no data, server failures, and timeouts. Unfortunately, it states that negative caching for server failures and timeouts is optional. We have submitted an Internet-Draft that proposes updating RFC 2308 to require negative caching for DNS resolution failures.
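As a rough illustration of what negative caching of resolution failures means in practice, the Python sketch below remembers a failed lookup for a short time and answers repeat requests locally instead of re-querying upstream. Everything here is an assumption made for illustration: the `FailureCache` class, the `resolve` function, the `query_upstream` callable, and the five-second failure TTL are hypothetical and are not taken from RFC 2308, the Internet-Draft, or any resolver implementation.

```python
import time


class FailureCache:
    """Remembers recent resolution failures, keyed by (name, query type)."""

    def __init__(self, failure_ttl=5.0, clock=time.monotonic):
        self.failure_ttl = failure_ttl   # assumed value, in seconds
        self.clock = clock
        self._expires = {}

    def record_failure(self, name, qtype):
        self._expires[(name.lower(), qtype)] = self.clock() + self.failure_ttl

    def is_cached_failure(self, name, qtype):
        key = (name.lower(), qtype)
        expiry = self._expires.get(key)
        if expiry is None:
            return False
        if self.clock() >= expiry:
            del self._expires[key]       # entry has aged out
            return False
        return True


def resolve(name, qtype, cache, query_upstream):
    """Answer from the failure cache when possible; otherwise ask upstream."""
    if cache.is_cached_failure(name, qtype):
        return "SERVFAIL"                # no upstream query is sent
    try:
        return query_upstream(name, qtype)
    except TimeoutError:
        cache.record_failure(name, qtype)
        return "SERVFAIL"
```

With a cache like this, a burst of client requests for an unreachable name produces one upstream attempt per failure TTL, rather than one attempt per client request.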

We believe it is important for the security, stability and resiliency of the internet’s DNS infrastructure that the implementers of recursive resolvers and public DNS services carefully consider how their systems behave in circumstances where none of a domain name’s authoritative name servers are providing responses, yet the parent zones are providing proper referrals. We feel it is difficult to rationalize the patterns that we are currently observing, such as hundreds of queries per second from individual recursive resolver sources. The global DNS would be better served by more appropriate rate limiting, and by algorithms such as exponential backoff, to address the types of cases we’ve highlighted here. Verisign remains committed to leading and contributing to the continued security, stability and resiliency of the DNS for all global internet users.
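For readers unfamiliar with exponential backoff, the following Python sketch shows the basic idea: each consecutive failure multiplies the wait before the next retry, up to a ceiling. The `backoff_delays` function and its default parameters are hypothetical values chosen for illustration, not a recommendation for any specific resolver.

```python
def backoff_delays(base=1.0, factor=2.0, cap=300.0, attempts=6):
    """Return the wait, in seconds, before each successive retry."""
    delays = []
    delay = base
    for _ in range(attempts):
        delays.append(min(delay, cap))   # never wait longer than the cap
        delay *= factor                  # grow the wait after each failure
    return delays


print(backoff_delays())           # [1.0, 2.0, 4.0, 8.0, 16.0, 32.0]
print(backoff_delays(cap=10.0))   # [1.0, 2.0, 4.0, 8.0, 10.0, 10.0]
```

Under these assumed parameters, a resolver retrying a failing name would send a handful of queries over the first minute instead of hundreds per second.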

This piece was co-authored by Verisign Fellow Duane Wessels and Verisign Distinguished Engineers Matt Thomas and Yannis Labrou.